Track real-time GPU and LLM pricing across all cloud and inference providers. Compare instances, models, performance, and pricing to make better infra decisions.

About

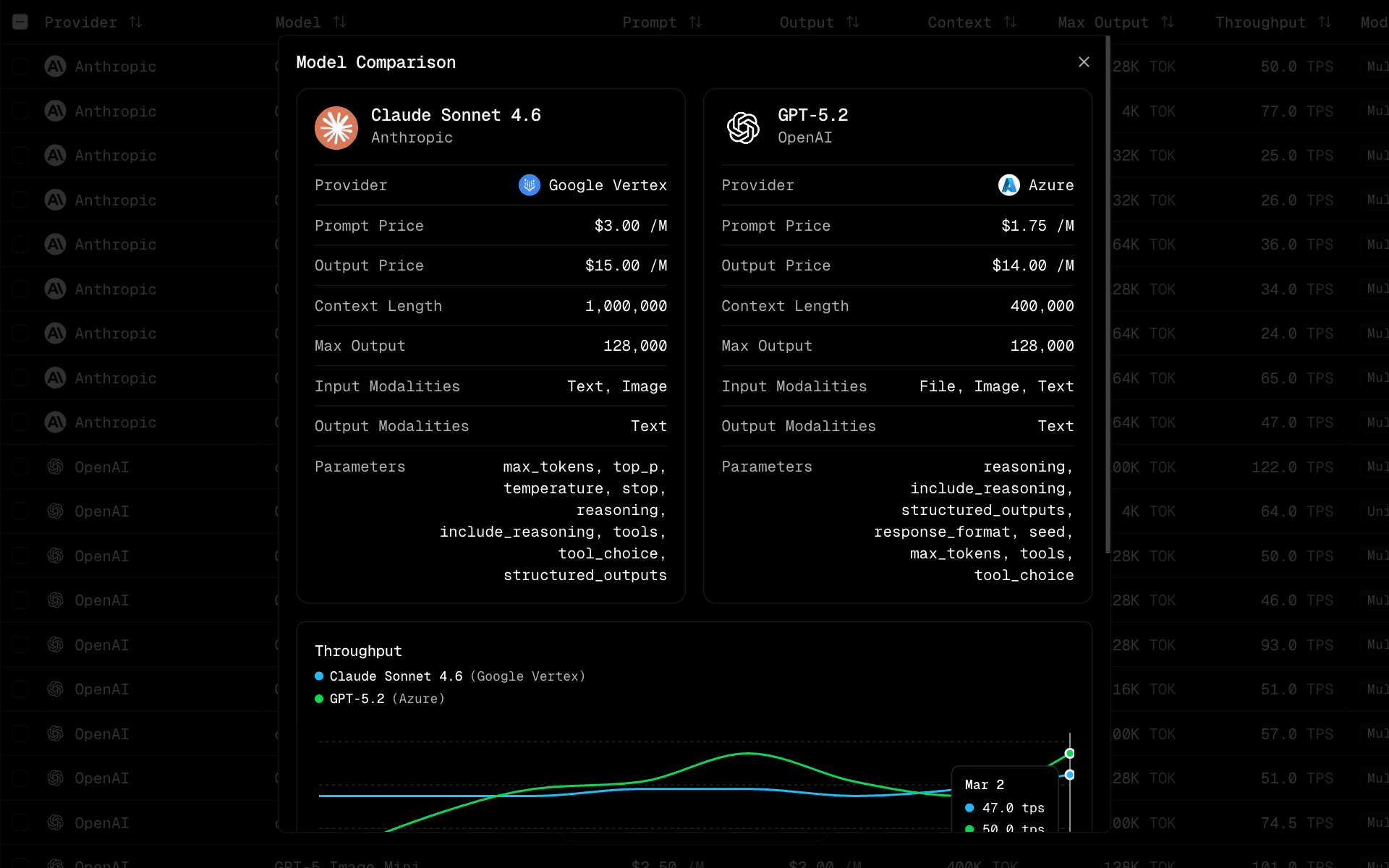

Scaling AI infrastructure involves a lot of guesswork. Costs for GPU clusters and large language model (LLM) inference vary widely across cloud providers, and the pricing is often opaque. Deploybase brings that data into a single real-time dashboard. You can see how the cost to run a specific model on AWS compares to Azure, Google Cloud, or specialized inference platforms, updated live as market conditions change. The goal is more than a price tag: it is the information you need to optimize your budget without sacrificing performance. We built Deploybase because every dollar saved on infrastructure can be reinvested in innovation, model training, or growing your user base. Instead of relying on outdated spreadsheets or manually checking multiple vendor websites, you can make data-driven decisions that directly affect your bottom line.

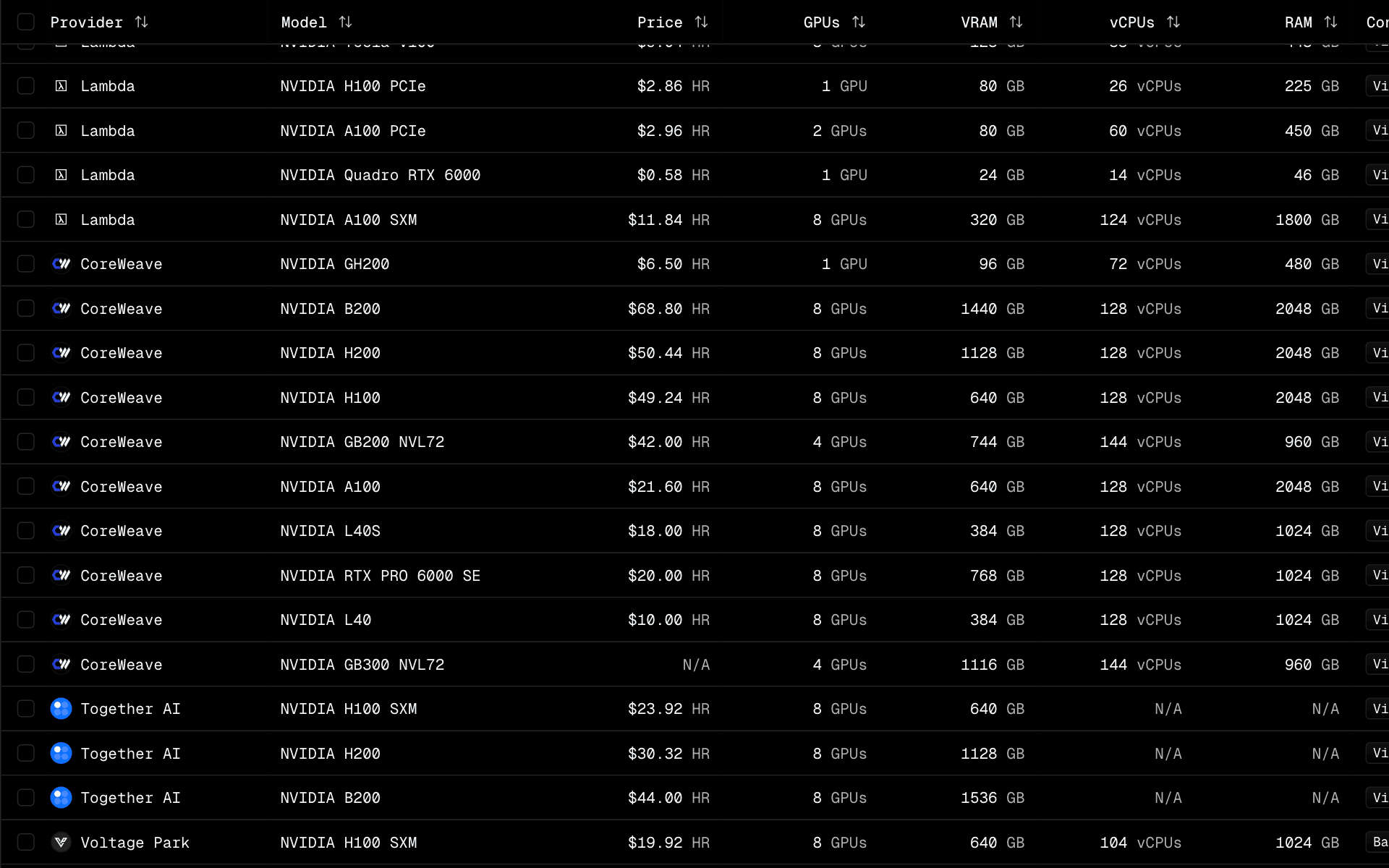

Deploybase helps developers, ML engineers, and CTOs move beyond simple cost comparisons. The platform lets you analyze the trade-offs between hardware configurations, specific GPU types, and LLM deployment methods. Need to know which provider offers the best price per token for a specific model size during peak hours? Deploybase surfaces that data instantly. You can compare raw hourly rates and also factor in performance benchmarks where available, so you can select the most cost-effective option for your workload, whether that is high-throughput batch jobs or low-latency serving. This granular insight turns infrastructure planning from a reactive chore into a proactive, strategic function, so you can deploy, iterate, and scale with confidence that you are paying a competitive price for your compute resources.
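To make the hourly-rate versus performance trade-off concrete, here is a minimal sketch of the arithmetic behind a price-per-token comparison. It is an illustration only, not Deploybase's method or API: the `Offer` type, the provider labels, the hourly rates, and the throughput figures are all hypothetical, and it assumes sustained throughput is known in tokens per second.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    """A hypothetical GPU offer; every field value used below is made up."""
    provider: str             # placeholder label, not a real vendor
    hourly_rate_usd: float    # price of the instance per hour
    tokens_per_second: float  # assumed sustained inference throughput


def cost_per_million_tokens(offer: Offer) -> float:
    """Convert an hourly rate into USD per one million generated tokens."""
    if offer.tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    tokens_per_hour = offer.tokens_per_second * 3600
    return offer.hourly_rate_usd / tokens_per_hour * 1_000_000


# Illustrative numbers only: the second offer costs more per hour
# but is assumed to sustain twice the throughput.
offers = [
    Offer("provider-a", hourly_rate_usd=2.00, tokens_per_second=1000.0),
    Offer("provider-b", hourly_rate_usd=3.00, tokens_per_second=2000.0),
]

# Rank from cheapest to most expensive per token, not per hour.
ranked = sorted(offers, key=cost_per_million_tokens)

for offer in ranked:
    print(f"{offer.provider}: ${cost_per_million_tokens(offer):.4f} per 1M tokens")
```

With these made-up inputs, the offer with the higher hourly rate ranks first, because its assumed throughput more than offsets the price difference. That is why a comparison of hourly rates alone can mislead.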

Ultimately, Deploybase is your command center for AI economics. It reduces the friction and uncertainty in cloud procurement, so your technical teams can focus on what matters most: building applications. By centralizing real-time pricing and performance data, it helps you avoid overpaying for compute. Whether you are a startup stretching every development dollar or an enterprise optimizing large operational expenditures, a single comprehensive view supports efficient spending and more predictable budgeting for your GPU and LLM deployments. Take control of your AI spend today and deploy smarter with Deploybase.