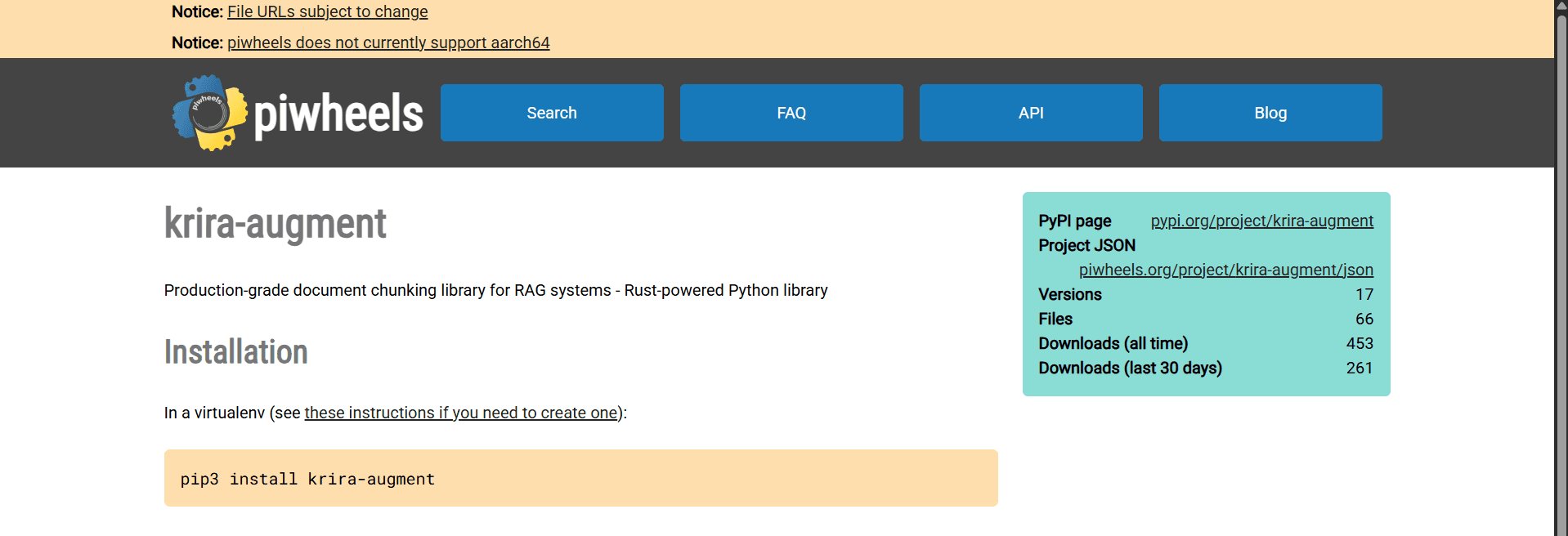

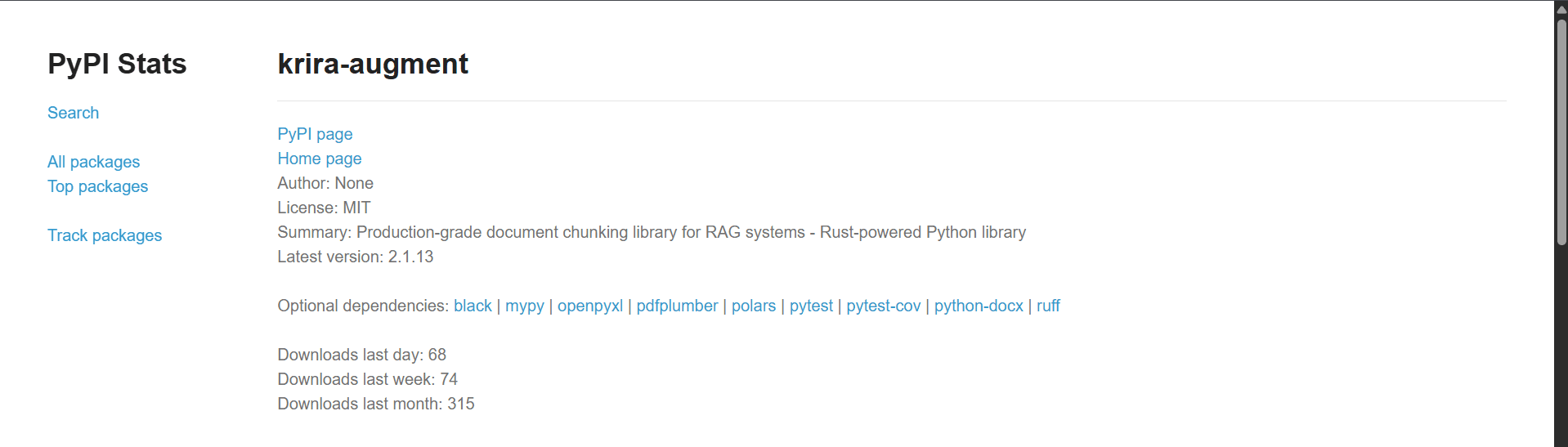

Most RAG pipelines bottleneck at chunking. LangChain's pure-Python chunker is slow and memory-hungry at scale. Krira Chunker fixes this with a Rust core and Python bindings:

- 40x faster than LangChain
- O(1) memory: flat at any scale
- Drop-in Python API
- 17 versions shipped, 315+ installs

`pip install krira-augment`

Built for devs tired of chunking being their pipeline's weak link.

About

Are you tired of watching your Retrieval Augmented Generation (RAG) pipelines grind to a halt because of a slow, resource-intensive document chunking step? For too long, developers have accepted that processing large volumes of text means fighting Python-based chunkers that consume excessive memory and slow down ingestion. When you are building AI applications, every millisecond counts, and basic text preparation shouldn't be the bottleneck. That's why we built Krira Chunker, a chunker designed from the ground up with performance as its first priority. Its core processing engine is written in Rust for speed and efficiency, while a familiar Python interface keeps it easy to adopt. Our benchmarks show it running up to 40 times faster than traditional solutions such as LangChain's built-in chunkers; at that ratio, a chunking job that used to take minutes can finish in seconds. That is not a marginal gain: faster data preparation lets the rest of your RAG system run at full speed.
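To check a speed claim like this on your own documents, a small timing harness can compare any two chunkers that take a string and return a list of chunks. This is a minimal sketch under our own assumptions: `time_chunker` and `speedup` are hypothetical helper names, not part of Krira Chunker or LangChain.

```python
import time
from statistics import median
from typing import Callable, List

# Any chunker that takes a string and returns a list of chunk strings.
Chunker = Callable[[str], List[str]]


def time_chunker(chunker: Chunker, text: str, repeats: int = 5) -> float:
    """Return the median wall-clock time in seconds for chunker(text)."""
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        chunker(text)
        timings.append(time.perf_counter() - start)
    # The median is less sensitive to warm-up runs and background load than the mean.
    return median(timings)


def speedup(baseline: Chunker, candidate: Chunker, text: str, repeats: int = 5) -> float:
    """Return how many times faster `candidate` is than `baseline` on the same text."""
    return time_chunker(baseline, text, repeats) / time_chunker(candidate, text, repeats)
```

As a baseline you could pass LangChain's `RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=100).split_text`. The Krira call to compare against depends on the package's actual API, which is not shown here. Results vary with document shape and chunk settings, so measure on data representative of your pipeline.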

What sets Krira Chunker apart is its scalability, achieved through careful memory management. Unlike chunkers whose memory usage grows linearly (or worse) with document size, Krira Chunker keeps memory usage roughly constant, that is, O(1) space with respect to input size. Whether you are processing a handful of documents or an entire enterprise knowledge base, your memory footprint stays flat and predictable. That stability matters in production environments, where resource allocation needs to be tight and reliable. Krira Chunker is designed as a drop-in replacement: if you currently chunk with a Python library, switching is as simple as updating your dependencies and running `pip install krira-augment`. We have shipped 17 versions and passed 315 installs, and we remain committed to stability and developer satisfaction. Stop letting the foundational step of data preparation be the weakest link in your AI chain. Krira Chunker gives developers the speed and efficiency to focus on the complex logic of their RAG systems instead of waiting for text splitting to finish.
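Flat memory usage like this typically comes from streaming: reading the input incrementally and discarding text once it has been emitted, so peak memory depends on the chunk and read-buffer sizes rather than on total input length. The sketch below illustrates that idea in pure Python with a simple fixed-size character splitter with overlap. It is not Krira Chunker's implementation or API; `stream_chunks` and its parameters are hypothetical names chosen for illustration.

```python
import io
from typing import Iterator, TextIO


def stream_chunks(source: TextIO, chunk_size: int = 1000, overlap: int = 100,
                  read_size: int = 64 * 1024) -> Iterator[str]:
    """Yield fixed-size character chunks with overlap from a file-like object.

    Hypothetical illustration: the buffer never holds more than about
    chunk_size + read_size characters, regardless of total input length.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    buffer = ""
    emitted = False
    while True:
        block = source.read(read_size)
        if not block:
            break
        buffer += block
        start = 0
        # Emit every full chunk currently in the buffer, advancing by `step`.
        while len(buffer) - start >= chunk_size:
            yield buffer[start:start + chunk_size]
            emitted = True
            start += step
        # Drop consumed text once per block so the buffer stays bounded.
        buffer = buffer[start:]
    # Flush the tail unless it is only the overlap already sent in the last chunk.
    if buffer and (not emitted or len(buffer) > overlap):
        yield buffer


if __name__ == "__main__":
    # io.StringIO stands in for any file-like object, such as an open text file.
    demo = io.StringIO("abcdefgh")
    print(list(stream_chunks(demo, chunk_size=4, overlap=1, read_size=3)))
    # → ['abcd', 'defg', 'gh']
```

Because `stream_chunks` is a generator, downstream steps such as embedding can consume chunks as they are produced instead of waiting for the whole list.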

Krira Chunker is built for modern developers who demand high performance and low overhead. If your work involves large datasets, real-time processing, or simply optimizing every stage of your machine learning pipeline, Krira Chunker gives you a high-throughput foundation. Feeding your models prepared data faster keeps your application responsive and cost-effective, even as your data volume grows. Bring Rust-native processing into your familiar Python workflow and move past the limitations of legacy chunking methods.