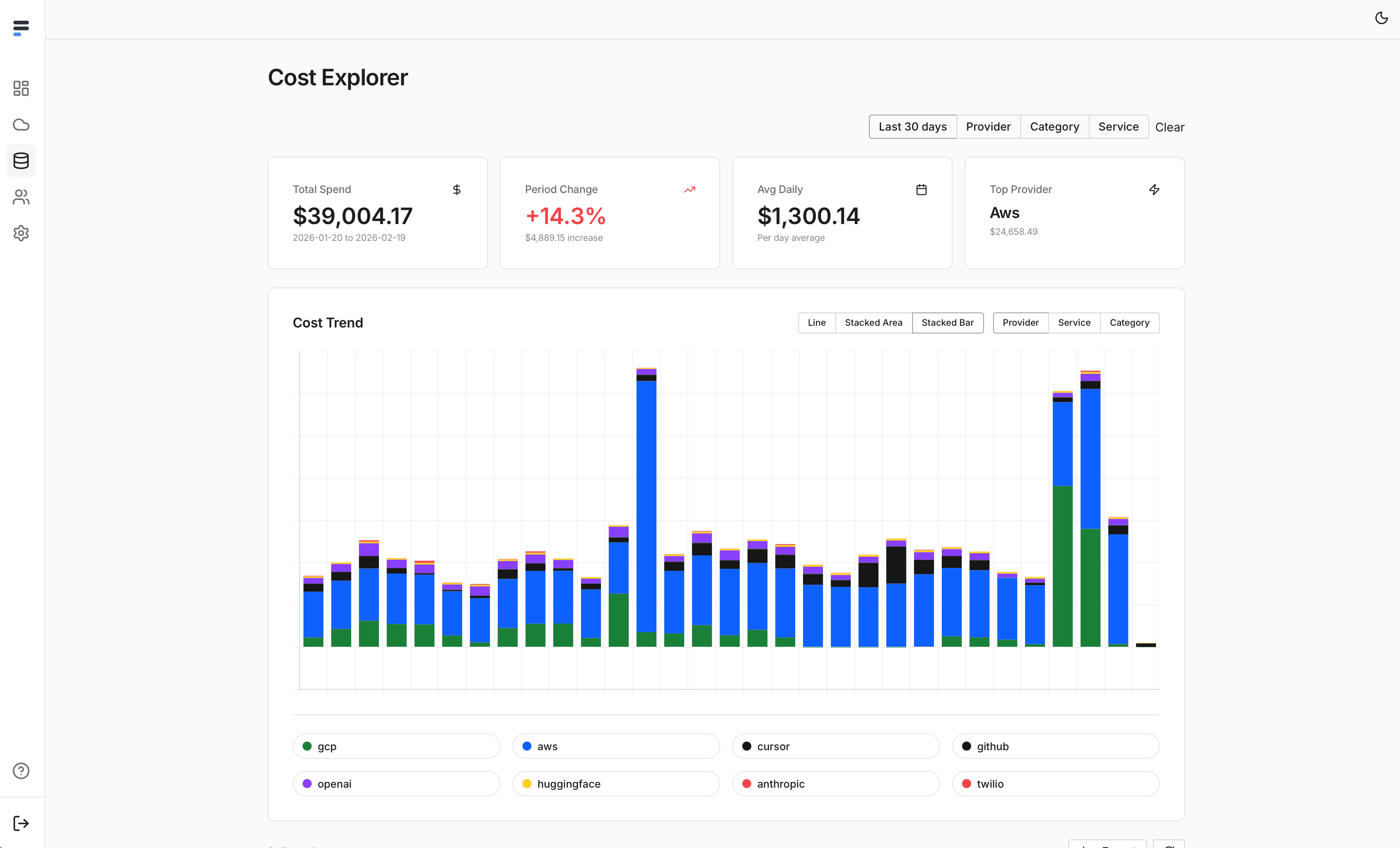

AI LLM Cost Monitoring. Don't get surprised by your bills. Take action before they land! OpenAI, Hugging Face, AWS, Bedrock, Google, GCP, Vertex, Azure, Grok, xAI, Copilot, Cursor, and more.

About

Are you diving deep into the world of Large Language Models (LLMs) and AI development, only to find yourself looking nervously at your cloud provider statements? You are certainly not alone. The power and flexibility of tools from OpenAI, Hugging Face, AWS Bedrock, Google Vertex AI, and Azure can lead to unexpected and sometimes staggering operational costs if left unchecked. That is precisely why StackSpend was created. This isn't just another monitoring dashboard; it's your proactive financial guardian in the rapidly evolving AI landscape. We understand that focusing on innovation is paramount, but innovation shouldn't come with the constant dread of a massive, surprise bill landing in your inbox. StackSpend gives you centralized visibility across all your major AI service providers, so you can see exactly where every token and every compute hour is being spent and keep your cutting-edge projects financially sustainable.

StackSpend transforms cost management from a reactive, end-of-month headache into a real-time, actionable part of your development workflow. Imagine knowing the moment a specific prompt chain or an unoptimized inference loop starts consuming resources far beyond your budget threshold. Our system is designed to alert you immediately, giving you the crucial window needed to pause, adjust parameters, or switch to a more cost-effective model before the meter runs too high. Whether you are experimenting with Grok, integrating Copilot features, or running heavy workloads on platforms like Cursor, StackSpend aggregates these disparate data streams into one intuitive interface. This comprehensive oversight means you stop wasting time chasing down invoices and start spending more time building, confident that you have a firm grip on your AI expenditure across the entire ecosystem, from foundational models to specialized cloud infrastructure.

Ultimately, StackSpend empowers you to optimize without sacrificing performance or speed. We give developers, project managers, and finance teams the clarity needed to make informed decisions about resource allocation. With granular insights into usage patterns across OpenAI, Google Cloud, Azure, and more, you can easily identify which experiments are providing the best return on investment and which ones need immediate tuning. Stop letting the cost of AI be a black box. Take control, maintain your momentum, and ensure that your journey into advanced artificial intelligence is both revolutionary and responsible. With StackSpend watching your back, you can focus on the next breakthrough, knowing your bills will stay manageable.